GPT-6 Launch Ignites AI Safety Debate Amidst Fierce Competition and Regulatory Calls

San Francisco, CA – The artificial intelligence landscape is once again buzzing with activity following OpenAI's recent unveiling of GPT-6, its latest flagship large language model. Heralded as a significant leap forward in AI capabilities, GPT-6 promises unprecedented advancements in reasoning, creativity, and multimodal understanding. However, its launch has simultaneously amplified a critical global discussion: how to ensure the safe and ethical development of increasingly powerful AI, especially as competitors like Google and Anthropic prepare their own next-generation models.

The New Frontier: GPT-6's Capabilities and Concerns

OpenAI, known for its pioneering work in AI, has positioned GPT-6 as a transformative tool capable of tackling complex problems previously thought beyond algorithmic reach. Early demonstrations highlight its enhanced ability to generate nuanced text, interpret intricate visual data, and even engage in more sophisticated, context-aware conversations. While these advancements hold immense potential for various sectors, from scientific research to creative industries, they also bring heightened concerns about misuse, bias, and the potential for autonomous decision-making without adequate human oversight. Critics and ethicists alike are calling for a proactive approach to understanding and mitigating these risks before they become widespread.

A Race for AI Supremacy: Google, Anthropic, and Beyond

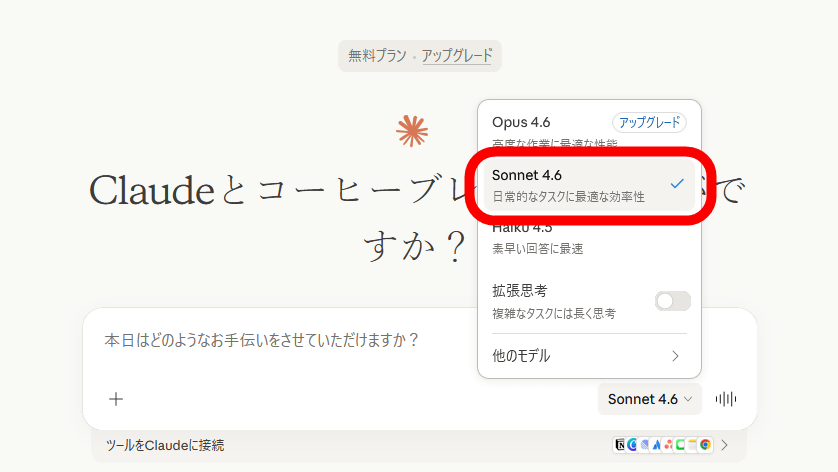

The release of GPT-6 is not occurring in a vacuum. The AI industry is in a heated race, with major players vying for technological leadership. Google, a perennial innovator in AI, is reportedly fast-tracking the development of its next iteration of Gemini, aiming to match or surpass GPT-6's capabilities. Similarly, Anthropic, founded by former OpenAI researchers, continues to refine its Claude series, emphasizing its Constitutional AI approach, which is designed for safety and steerability. This intense competition drives innovation, but it also raises the question of whether these powerful models are being deployed faster than ethical deliberation and regulation can keep up. The pressure to release cutting-edge technology can overshadow the imperative for thorough safety vetting.

The Urgent Call for AI Regulation

The rapid evolution of AI models like GPT-6 has put regulatory bodies worldwide on high alert. Governments are grappling with the challenge of creating frameworks that foster innovation while safeguarding against potential harms. Discussions range from mandatory safety audits and transparency requirements to liability frameworks for AI-generated content and decisions. The European Union's AI Act, a landmark piece of legislation, serves as a prominent example of a comprehensive attempt to categorize and regulate AI based on its risk level. Meanwhile, the United States and other nations are exploring their own approaches, often through executive orders, voluntary commitments from tech companies, and congressional hearings. The goal is to strike a delicate balance: encouraging technological progress without compromising public trust or safety. For more insights into global regulatory efforts, the OECD's AI Policy Observatory provides valuable resources and analysis at https://oecd.ai/.

Balancing Innovation with Safety: The Path Forward

The debate surrounding AI safety and regulation is complex, involving diverse stakeholders from technologists and ethicists to policymakers and the public. Proponents of rapid innovation argue that overly restrictive regulations could stifle progress and cede leadership to less scrupulous actors. Conversely, advocates for stringent oversight argue that the potential societal impact of advanced AI necessitates a cautious, human-centric approach. The emerging consensus is that progress will require collaboration on three fronts: international cooperation, industry self-regulation, and adaptive governmental frameworks. As GPT-6 and its rivals push the boundaries of what AI can achieve, the imperative to develop these powerful tools responsibly has never been clearer. The coming months will likely see intensified discussions, and potentially decisions that shape the future of artificial intelligence for decades to come.
